Create deep fake

11/19/2023

Static subject: our colleagues spotted that the speaker was oddly robotic.

Sibilant sounds are especially problematic.ģ. Mouth shape and sibilant sound issues: native French speakers were particularly attuned to this: the shape of the mouth and lips is spot on in parts, but less convincing in others.

Audio to video synchronization: our newsroom tackles sync issues all the time, but in this case the video appears to slip in and out of sync with the audio, which is extremely unusual.Ģ.

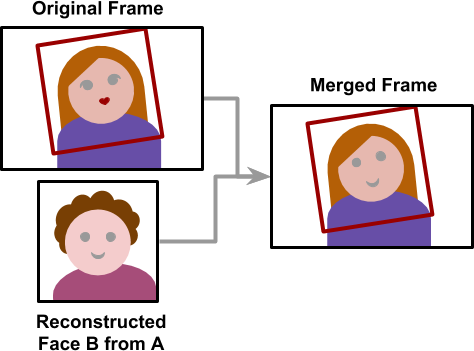

Some of my colleagues knew I was working on this project, and they were quick to identify the red flags. However, I did not wish to plant this content on social media, so I simply sent the video via a chat app to my colleagues, so they could view it on a mobile screen, and I asked for their reaction. We trace it back to the source, and we ask endless questions. We consider where, why and how it was shared. Normally when we attempt to verify video, we don’t look at a clip in isolation. It also gave us a piece of synthetic video that we could start showing to colleagues. But clearly even a small change, such as a few extra billion here or there, could matter a great deal in a real-life scenario.Ĭreating this video was an education in itself: we learnt what source material required to give the most convincing results. In our example we had the French script match the English. We may have literally put words in her mouth, but our English colleague was left speechless when she watched herself talking with the voice of her French counterpart. We gave both sets of video files to the technology start-up, and it took them just a few hours to map the French mouth movements and use generative AI to recreate the whole lower portion of our English-speaking colleague’s face. Next, we enlisted the help of our French colleague, who repeated the process in her native tongue. It’s a common set-up for broadcasters.Ī colleague of ours agreed to help us out, and we filmed her giving a convincing performance outlining her imaginary company’s upcoming expansion plans. We picked a situation in which this could be the case: an interviewee speaking “down-the-line” to a reporter from a remote studio. For me the real risk came when there was only one camera in a room, and few witnesses to back up what was actually said. But I know every time such figures speak there is very often row of cameras on them, plus a team of aides. 
Previous deepfakes created by academic teams have involved public figures like Barack Obama and Angela Merkel. This came in the form of a London-based startup that uses generative AI to create “reanimated video”, allowing for seamless dubbing into any language. Through our research into the topic, it was clear we would need some technical expertise. The aim of this was not to trick our colleagues, but rather to consider how our verification workflows might be tested with a piece of video like this. That’s why I decided to join forces with Nick Cohen, Global Head of Video Product at Reuters, to create our own piece of synthetic video as part of a newsroom experiment. I also know though my experience of training journalists in verification techniques that delving into real-life examples is the best way to learn.īut when I looked into the world of deepfakes, I found very few news-relevant examples to study. When approaching third-party content, I like to think in terms of worst-case scenarios: how could this video, or the context it has appeared in, been manipulated? Therefore, the potential verification challenges posed by this new type of fake video were something I have to take seriously. It’s not hard to see how the possibilities arising from this are serious – both for the individuals concerned, and for the populations targeted by a fake video. These ‘deepfakes’ (the term a portmanteau of “deep learning” and “fake”) are created by taking source video, identifying patterns of movement within a subject’s face, and using AI to recreate those movements within the context of a target piece of video.Īs such, effectively a video can be created of any individual, saying anything, in any setting. One new threat on the horizon is the rise of so-called “deepfakes” – a startlingly realistic new breed of AI-powered altered videos. 
It’s a challenge to verify this content – but it’s one we are committed to undertaking, because we know that very often the first imagery from major news events is that captured by eyewitnesses on their smartphones: it’s now an integral part of storytelling.Īs technology advances, so does the range of ways material can be manipulated. Every day, the specialist team of social media producers I lead at Reuters encounters video that has been stripped of context, mislabeled, edited, staged or even modified using CGI.
